ReDup IS DeDup: you can use it to create a VFR MKV just like you can with DeDup. The difference for your purposes would be the ability to provide a separate clip for detection purposes, or a second one if desired. The point of that is that you can provide a denoised clip for detection purposes without needing the denoising applied to the output. The secondary detection clip is there so you can use a different denoising process for the comparison. I would have added more options if I'd been able to continue. All of which is completely beside the point.
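To make the detection-clip idea concrete, here is a minimal Python sketch. It is not ReDup's or DeDup's actual code or API: the frame model (flat lists of pixel values), the function names, and the `threshold` parameter are all assumptions for illustration. The keep/drop decision is made on the detection clip, and the frames that are kept are taken from the untouched output clip.

```python
from typing import List, Sequence

Frame = Sequence[int]  # toy model: a frame is a flat sequence of pixel values


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two same-sized frames."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def kept_indices(detect: List[Frame], threshold: float) -> List[int]:
    """Indices of frames that differ from the last kept frame by more than threshold."""
    kept = [0] if detect else []
    for i in range(1, len(detect)):
        if mean_abs_diff(detect[i], detect[kept[-1]]) > threshold:
            kept.append(i)
    return kept


def dedup(output: List[Frame], detect: List[Frame], threshold: float) -> List[Frame]:
    """Decide on the detection clip, but return frames from the output clip."""
    if len(output) != len(detect):
        raise ValueError("output and detection clips must have the same length")
    return [output[i] for i in kept_indices(detect, threshold)]


# Hypothetical data: the output clip is noisy, the detection clip is denoised.
noisy = [[10, 10], [11, 9], [50, 50], [51, 49]]
denoised = [[10, 10], [10, 10], [50, 50], [50, 50]]

print(dedup(noisy, denoised, 0.0))  # duplicates found via the denoised clip
print(dedup(noisy, noisy, 0.0))     # exact matching on the noisy clip finds none
```

With a threshold of 0 the comparison is an exact match, so the noisy clip on its own yields no duplicates, while the denoised detection clip exposes them and the noisy frames are still what gets written out.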

#Dedup avisynth plus

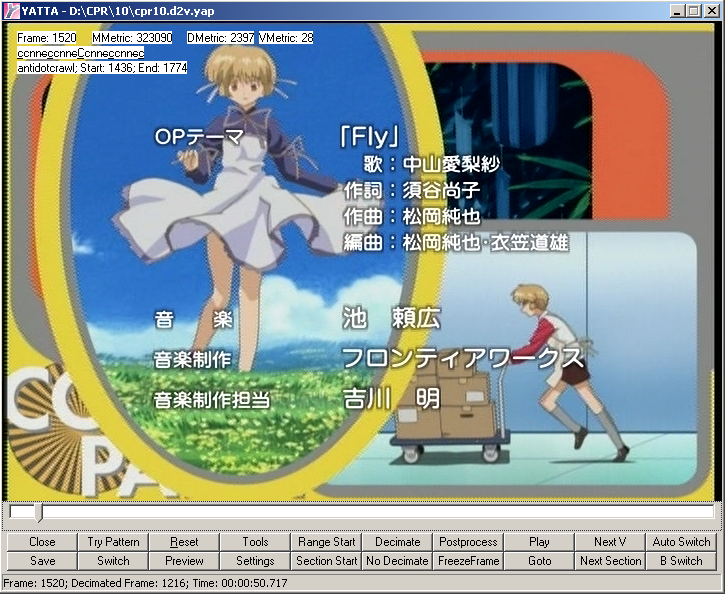

ExactDeDup removes frames that are EXACTLY the same. IVTC'd frames are NOT exactly the same: the MPEG encoding process plus the IVTC leaves the "duplicate" frames slightly different, so ExactDeDup doesn't touch them.

#Dedup avisynth mp4

Mandatory encoding to mp4 (in order to handle scene changes correctly) negates all the benefits of your application: it more than doubles the total processing time, just to output a format that was not requested. Here is the result of my very last test, which looks even more ridiculous:

Video FPS: 23.976 - Total Number Of Frames: 10481
Extracting video frames from input video.
frame=10481 fps= 42 L time=00:07:15.22 speed=1.75x
Generating timecodes.

So, the interpolation by itself took 02:20:03.

To get proper frame insertion at scene cuts and the best possible output quality, I HAVE to run the mp4 encoding with CRF 0 (which I don't really need), because "some of the encoding options are exclusive to the MP4 container". (Your app uses ffmpeg: which "encoding options exclusive to the MP4 container" does ffmpeg presume?) And that encoding (to the mp4 format that I do not need) took THREE (!!!) times longer than the interpolation procedure itself. I wasted more than 3 hrs producing a stream format that doesn't satisfy my needs, just to get a stream with proper scene detection output.

Workaround (probably a bit dirty, but so far there is no other choice):

1. Check "Don't Delete Temp Folder After Interpolation" in "Settings" -> "General".
2. Adjust parameters and start interpolation.
3. "Brutally" interrupt "Flowframes" as soon as it finishes interpolation and starts the mp4 encoding.
4. Bring the *.png's from the "interp" folder into your NLE with the proper frame rate interpretation.
5. Replace the distorted frames at scene cuts with the corresponding frames from the "scenes" folder.
6. Output your video in your preferred format.

Sure, this is a bit of a crazy approach, but it is the only way I have found so far to get things to work.

The major item on my wishlist for now is to manage proper frame de-duplication. Whatever I did, I still couldn't get a successful result. So, let's take a look at the original video that I already mentioned before. This video contains duplicated frames in the following pattern:

- frame #14 - duplicate of frame #13
- frame #19 - duplicate of frame #18
- frame #24 - duplicate of frame #23

As you can see, this is the obvious pattern of a telecined video (23.976 to 29.97), which can easily be restored to 23.976 using the standard pulldown removal procedure in most NLEs (Avisynth "MultiDecimate" can be used as well).

The processed video (processed by "Flowframes" with "Remove Duplicated Frames After Extraction", threshold 10%), instead of having its duplicated frames replaced by interpolated ones, not only leaves them untouched but even gains more duplicated frames in a weird, irregular pattern:

- frames #5, 6 - duplicates of frame #4
- frames #17, 18 - duplicates of frame #16
- frame #27 - duplicate of frame #26

Besides that, the length of the processed video (4s 789ms) differs from the length of the original (4s 71ms). Well, this is not a big difference for a ~4 sec video, but can you imagine how far out of sync a video of, let's say, 1 hr would be? For now this is the major problem for me. (BTW, this issue has already been mentioned by GearlessGoG as well.)